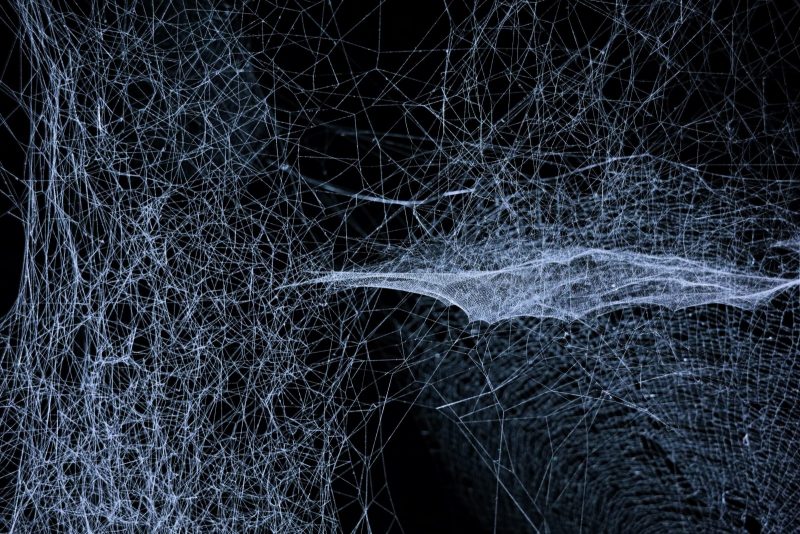

We all know data is the new oil. But before it gives us the wealth of intelligence we are after, it needs to be dug out and prepared. This is exactly what data preprocessing is all about.

Understanding the Importance of Data Preprocessing

Companies collect data from a variety of sources and in a wide range of forms. It can be unstructured, meaning texts, images, audio files, and videos, or structured, meaning data from customer relationship management (CRM) tools, invoicing systems or databases. We call it raw data: unprocessed data that may contain inconsistencies and does not have a regular form that can be used straight away.

To analyse it using machine learning, and therefore to make full use of it in all areas of business, it needs to be cleaned and organised: preprocessed, in one word.

So, what is data preprocessing? Data preprocessing is a crucial step in the data analysis and machine learning pipeline. It involves transforming raw, usually structured data into a format that is suitable for further analysis or for training machine learning models, with the goal of improving data quality, addressing missing values, handling outliers, normalising data and reducing dimensionality.

Its main benefits include:

- Improved data quality

Data preprocessing helps identify and handle issues such as errors and inconsistencies in raw data. By removing duplicates, correcting errors and addressing missing values, the data becomes more accurate and reliable.

- Handling missing data

Raw data often has missing values, which can cause problems during analysis or modelling. Data preprocessing addresses this through imputation (replacing missing values with estimated values) and deletion (removing instances or features with missing data).
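
The two strategies mentioned above, deletion and imputation, can be sketched in a few lines of plain Python. This is a minimal illustration rather than a recommended implementation: the records, the column names `age` and `income`, and the helper functions are all hypothetical, and mean imputation is only one of several possible estimates.

```python
from statistics import mean

# Hypothetical toy records; None marks a missing value.
rows = [
    {"age": 34, "income": 52000},
    {"age": None, "income": 61000},
    {"age": 45, "income": None},
    {"age": 29, "income": 49000},
]


def drop_incomplete(records):
    """Deletion: keep only records that have no missing values."""
    return [r for r in records if all(v is not None for v in r.values())]


def impute_mean(records, column):
    """Imputation: replace missing values in `column` with the mean of the observed ones."""
    observed = [r[column] for r in records if r[column] is not None]
    fill = mean(observed)
    # Build new dicts so the input records are left untouched.
    return [{**r, column: fill if r[column] is None else r[column]} for r in records]


deleted = drop_incomplete(rows)
imputed = impute_mean(impute_mean(rows, "age"), "income")
```

Deletion is simple but discards half of this toy dataset, while imputation keeps every record at the cost of introducing estimated values, so the right choice depends on how much data is missing and why.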

- Outlier detection and handling

Outliers are data points that deviate significantly from the normal patterns in a dataset; they can be the result of errors, anomalies, or rare events. Data preprocessing helps to identify them and handle them by removing them, transforming them, or treating them separately, depending on the requirements of the analysis or model.
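
One common way to flag outliers is the interquartile range (IQR) rule, sketched below with the Python standard library. The article does not prescribe a specific detection method, so the IQR rule, the multiplier `k=1.5` and the sample `readings` are assumptions chosen for illustration.

```python
from statistics import quantiles


def iqr_bounds(values, k=1.5):
    """Return (low, high) limits; points outside them are treated as outliers."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


# Hypothetical sensor readings with one suspicious value.
readings = [10, 12, 11, 13, 12, 11, 95, 12, 10, 13]
low, high = iqr_bounds(readings)

# Three ways of handling the flagged points:
outliers = [x for x in readings if x < low or x > high]   # treat separately
kept = [x for x in readings if low <= x <= high]          # remove
clipped = [min(max(x, low), high) for x in readings]      # transform (clip to the limits)
```

The three result lists correspond to the three handling options named above: treating outliers separately, removing them, or transforming them.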

- Normalisation and scaling

Normalisation of data ensures all features have similar ranges and distributions, preventing certain features from dominating others during analysis or modelling. Scaling brings the data within a specific range, which also makes it more suitable for machine learning algorithms.
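
As a rough sketch of what this looks like in practice, the snippet below implements two widely used techniques: min-max scaling, which maps values to the range 0 to 1, and standardisation, which rescales values to zero mean and unit standard deviation. The function names and the `incomes` sample are hypothetical.

```python
from statistics import mean, pstdev


def min_max_scale(values):
    """Map values linearly onto the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:  # constant feature: avoid division by zero
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def standardise(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:  # constant feature: avoid division by zero
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


incomes = [30000, 45000, 60000, 90000]
scaled = min_max_scale(incomes)
z_scores = standardise(incomes)
```

Min-max scaling is sensitive to extreme values because the minimum and maximum define the range, so it is usually worth handling outliers first.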

- Dimensionality reduction

High-dimensional datasets can pose challenges for analysis and modelling, leading to increased computational complexity and a higher risk of overfitting. Dimensionality reduction helps to reduce the number of features while retaining the most relevant information, which simplifies the data representation and can improve model performance.

- Feature engineering

Feature engineering involves creating new features from existing ones or transforming features to improve their relevance or representation. It helps capture important patterns or relationships in the data that raw features alone could miss, leading to more effective models.

- Compatibility with machine learning algorithms

Different machine learning algorithms have specific assumptions and requirements about the input data. Data preprocessing ensures that the data is in a suitable format and adheres to the assumptions of the chosen model.

- Reliable insights

Preprocessing ensures that the data used for analysis is accurate, consistent, and representative, leading to more reliable and meaningful insights. It reduces the risk of drawing incorrect conclusions or making flawed decisions due to data issues.

The Data Preprocessing Process and Main Steps

The data preprocessing process usually involves several key steps that transform raw data into a clean format, suitable for analysis or machine learning. While the steps may vary depending on the dataset and the specific requirements of the analysis or modelling task, the most common ones include:

Data Collection

The first step is to gather the raw data from various sources, such as databases, files, or APIs. The data collection process can involve extracting, scraping, or downloading data.

Data Cleaning

This step focuses on identifying and handling errors, inconsistencies, or outliers in the data. It involves tasks such as:

- removing duplicate records – identifying and removing identical or nearly identical entries

- correcting errors – identifying and correcting any errors or inconsistencies in the data

- handling missing data – addressing missing values in the dataset, either by imputing estimated values or by treating missingness as a separate category

- handling outliers – detecting and handling outliers by either removing them, transforming them, or treating them separately, based on the analysis or model requirements.
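
To illustrate the first task in the list, here is a minimal sketch of removing identical and nearly identical records. "Nearly identical" is interpreted here as equal after lower-casing and collapsing whitespace, which is an assumption made for the example; the `customers` data, the `name` and `email` fields and the helper names are hypothetical.

```python
def normalise_key(record, fields=("name", "email")):
    """Build a comparison key that ignores letter case and extra whitespace."""
    return tuple(" ".join(str(record[f]).lower().split()) for f in fields)


def drop_duplicates(records):
    """Keep the first occurrence of each record, judged by its normalised key."""
    seen = set()
    unique = []
    for record in records:
        key = normalise_key(record)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


customers = [
    {"name": "Ada Lovelace", "email": "ada@example.com"},
    {"name": "ada  lovelace ", "email": "ADA@example.com"},  # near-duplicate
    {"name": "Alan Turing", "email": "alan@example.com"},
    {"name": "Ada Lovelace", "email": "ada@example.com"},    # exact duplicate
]
unique_customers = drop_duplicates(customers)
```

Real datasets often need fuzzier matching (for example, typos in names), which this sketch deliberately leaves out.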

Data Transformation

In this step, data is transformed into a suitable format to improve its distribution, scale, or representation. Transformations that depend on information contained in the data (for example, a mean or a minimum and maximum) should be fitted after the train-test split, on the training data only, and then applied unchanged to the test set. Some common data transformation techniques include:

- feature scaling – bringing numerical features to a common scale, using methods such as standardisation or min-max scaling

- normalisation – ensuring that all features have similar ranges and distributions, preventing certain features from dominating others during analysis or modelling

- encoding categorical variables – converting categorical variables into numerical representations that can be processed by machine learning algorithms. This can involve techniques like one-hot encoding, label encoding, or ordinal encoding

- text preprocessing – for textual data, tasks like tokenisation, removing stop words, stemming or lemmatisation, and handling special characters or symbols may be performed

- embedding – representing textual data in a numerical format.
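
Of the techniques listed above, encoding categorical variables is easy to show in a few lines. The sketch below covers one-hot encoding and ordinal encoding in plain Python; the `colours` and `sizes` samples, the helper names and the choice to encode unseen categories as all zeros are assumptions made for illustration.

```python
def fit_categories(values):
    """Learn the category vocabulary (sorted for a stable column order)."""
    return sorted(set(values))


def one_hot(value, categories):
    """Return a 0/1 vector with a single 1 at the position of `value`.

    A value that was not seen when fitting yields an all-zero vector.
    """
    return [1 if value == category else 0 for category in categories]


# One-hot encoding: for categories without a natural order.
colours = ["red", "green", "blue", "green"]
categories = fit_categories(colours)  # ['blue', 'green', 'red']
encoded = [one_hot(colour, categories) for colour in colours]

# Ordinal encoding: for categories with a meaningful order, given explicitly.
size_order = {"small": 0, "medium": 1, "large": 2}
sizes = ["medium", "small", "large"]
ordinal = [size_order[size] for size in sizes]
```

One-hot encoding avoids implying an order between categories, whereas ordinal encoding is appropriate only when such an order really exists.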

Feature Selection / Extraction

In this step, the most relevant features are selected or extracted from the dataset. The goal is to reduce the dimensionality of the data or to select the most informative features, using techniques like principal component analysis (PCA), recursive feature elimination (RFE), or correlation analysis.
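
The simplest of the techniques named above, correlation analysis, can be sketched as follows: compute the Pearson correlation between each feature and the target, and keep the features whose absolute correlation reaches a threshold. PCA and RFE are not shown. The feature names, the data and the `threshold=0.5` cut-off are hypothetical values chosen for the example.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:  # constant column carries no signal
        return 0.0
    return cov / sqrt(var_x * var_y)


def select_by_correlation(features, target, threshold=0.5):
    """Keep features whose absolute correlation with the target is >= threshold."""
    scores = {name: pearson(column, target) for name, column in features.items()}
    selected = [name for name, r in scores.items() if abs(r) >= threshold]
    return selected, scores


# Hypothetical housing data: each feature is a column of five observations.
features = {
    "floor_area": [50, 70, 90, 110, 130],
    "door_colour_code": [3, 1, 2, 1, 3],
    "distance_to_centre": [9, 8, 6, 3, 1],
}
price = [150, 200, 260, 320, 390]

selected, scores = select_by_correlation(features, price)
```

Pearson correlation only captures linear relationships with the target, so a feature dropped by this filter may still be useful to a non-linear model.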

Data Integration

If multiple datasets are available, this step involves combining or merging them into a single dataset, aligning the data based on common attributes or keys.
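
A minimal sketch of merging two datasets on a common key is shown below. It performs an inner join, so records without a match are dropped, and it assumes the key is unique in the second dataset. The `crm` and `invoices` samples and the `customer_id` key are hypothetical.

```python
def merge_on_key(left, right, key):
    """Inner join: combine records from both datasets that share the same `key` value.

    Assumes `key` is unique within `right`.
    """
    right_index = {record[key]: record for record in right}
    merged = []
    for record in left:
        match = right_index.get(record[key])
        if match is not None:
            merged.append({**record, **match})
    return merged


crm = [
    {"customer_id": 1, "name": "Ada"},
    {"customer_id": 2, "name": "Alan"},
    {"customer_id": 3, "name": "Grace"},
]
invoices = [
    {"customer_id": 1, "total": 120.0},
    {"customer_id": 3, "total": 75.5},
]

combined = merge_on_key(crm, invoices, "customer_id")
```

Whether unmatched records should be dropped, as here, or kept with missing values is a design decision that depends on the analysis.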

Data Splitting

It is common practice to split the dataset into training, validation, and test sets. The training set is used to train the model, the validation set helps in tuning model hyperparameters, and the test set is used to evaluate the final model's performance. Splitting the data supports unbiased evaluation and helps detect overfitting.
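
The sketch below shows one way to produce such a split and then fit a data-dependent transformation (here, standardisation) on the training set only before applying it to the other two sets. The 60/20/20 proportions, the fixed `seed` and the toy `values` are assumptions made for the example.

```python
import random
from statistics import mean, pstdev


def split_dataset(rows, train=0.6, validation=0.2, seed=0):
    """Shuffle a copy of `rows` and cut it into train, validation and test parts."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * validation)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


values = [float(v) for v in range(1, 21)]
train_set, val_set, test_set = split_dataset(values)

# Fit the scaling statistics on the training set only...
mu, sigma = mean(train_set), pstdev(train_set)


def scale(xs):
    """Apply the training-set statistics to any subset."""
    return [(x - mu) / sigma for x in xs]


# ...then reuse them unchanged for validation and test data.
train_scaled = scale(train_set)
val_scaled = scale(val_set)
test_scaled = scale(test_set)
```

Computing `mu` and `sigma` from the full dataset instead would leak information from the test set into training and make the evaluation look better than it should.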

Dimensionality Reduction

Dimensionality reduction is used to reduce the number of features or variables in a dataset while preserving the most relevant information. Its main benefits include improved computational efficiency, a lower risk of overfitting and simpler data visualisation.

Summary: Data Preprocessing Really Pays Off

By performing effective data preprocessing, analysts and data scientists can improve the quality, reliability, and suitability of the data for analysis or model training. It helps mitigate common challenges, improve model performance, and obtain more meaningful insights from the data, all of which play a crucial role in data analysis and machine learning tasks. It also helps unlock the true potential of the data, facilitating accurate decision-making and ultimately maximising the value derived from the data.

After data preprocessing, it is worth using a Feature Store: a central place for keeping preprocessed data, which makes it available for reuse. Such a system saves money and helps manage the whole workflow.

To make the most of your data assets and learn more about the value of your data, get in touch with our team of experts, who are ready to answer your questions and advise you on data processing services for your business. At Future Processing we offer a comprehensive data solution which will allow you to transform your raw data into intelligence, helping you make informed business decisions at all times.

By Aleksandra Sidorowicz
